Pixels are for images. Millimeters are for engineering

From the very beginning of our work on camera calibration, I kept coming back to one question: can one calibrated camera really be compared to another?

My friend and partner Alex Pak, who has spent more than ten years deeply involved in calibration, used to say there was no real practical reason for this comparison.

In most calibration workflows, quality is evaluated using RMS Reprojection Error (RPE). During calibration, we collect data, refine the process, and optimize the model to reduce this value as much as possible. RPE measures how far a known 3D point, projected through the calibrated model, lands from its detected position on the camera sensor. A good calibration usually reports sub-pixel values.
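To make the definition concrete, here is a minimal sketch of how an RMS RPE could be computed. It assumes an ideal pinhole model with no lens distortion and 3D points already expressed in the camera frame. The function names and the parameters `fx`, `fy`, `cx`, `cy` (in pixels) are illustrative assumptions, not the pipeline used for the results in this article.

```python
import math

def project_pinhole(point_cam, fx, fy, cx, cy):
    """Project a 3D point (camera frame, Z forward) to pixel coordinates.

    Assumption: ideal pinhole model, no distortion terms.
    """
    x, y, z = point_cam
    return (fx * x / z + cx, fy * y / z + cy)

def rms_rpe(points_cam, detections_px, fx, fy, cx, cy):
    """RMS distance, in pixels, between projected and detected image points."""
    sum_sq = 0.0
    for point, (u_det, v_det) in zip(points_cam, detections_px):
        u, v = project_pinhole(point, fx, fy, cx, cy)
        sum_sq += (u - u_det) ** 2 + (v - v_det) ** 2
    return math.sqrt(sum_sq / len(points_cam))
```

Note that the result is in pixels, so its physical meaning depends entirely on the camera that produced the image.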

For a single camera, this makes perfect sense. It helps determine whether one calibration attempt is better than another. But once we try to compare two completely different cameras using RPE, the logic breaks down.

Why? Because pixels are not universal units. What a “pixel” means usually depends on internal image processing (cropping, undistortion, binning, de-Bayering, and so on), which may differ from camera to camera. Even within the same camera, a pixel at the center of the image and one at the edge may cover very different solid angles of the camera’s view and have very different sensitivity due to vignetting. Therefore, RPE values are not easily comparable across cameras.
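A small calculation illustrates the center-versus-edge effect. This sketch assumes an ideal pinhole camera with no distortion and looks only at the horizontal angular width of one pixel; the focal length of 1000 px and the 1000 px offset are made-up values chosen for easy arithmetic.

```python
import math

def pixel_angular_width(offset_px, focal_px):
    """Horizontal angle (radians) subtended by one pixel.

    offset_px is the distance of the pixel's inner edge from the
    principal point. Assumption: ideal pinhole, no lens distortion.
    """
    return math.atan((offset_px + 1) / focal_px) - math.atan(offset_px / focal_px)

focal_px = 1000.0                                # hypothetical focal length in pixels
center = pixel_angular_width(0.0, focal_px)      # pixel next to the principal point
edge = pixel_angular_width(1000.0, focal_px)     # pixel 45 degrees off-axis
ratio = edge / center                            # about 0.5 in this setup
```

In this hypothetical setup the edge pixel covers only about half the angle of the center pixel, so the same one-pixel error corresponds to different angular errors depending on where in the image it occurs.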

This means RPE is useful for optimizing calibration inside one camera system, but it does not tell us how cameras compare in the real world.

Now imagine a different scenario.

Suppose I am building a robot that needs to interact with physical objects. In this case, the camera is not simply an imaging device; it becomes a measurement tool that helps the robot understand space.

The task itself defines the real requirements:

What field of view is needed?

What working distance is required?

What physical accuracy must be achieved?

Choosing a camera based on field of view and working distance is relatively easy. The difficult question is this: which camera can actually deliver the required 3D precision?

RPE does not answer that question, because it measures error on the sensor plane rather than in physical space.

This was the core idea behind our approach.

Instead of only minimizing reprojection error, we focus on minimizing what we call Forward Projection Error (FPE).

Forward Projection Error (FPE)

FPE evaluates how accurately a ray originating from a camera pixel reaches the intended 3D point in the real world. Unlike RPE, FPE is measured in physical units such as millimeters or microns at a specified distance.
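One plausible way to turn this definition into numbers is sketched below: back-project each detected pixel into a ray, then measure the perpendicular distance from the known 3D point to that ray. This is an illustration under stated assumptions (ideal pinhole model, no distortion, points in the camera frame in millimeters), and the function names are hypothetical. The exact formulation behind the results in this article may differ.

```python
import math

def pixel_to_ray(u, v, fx, fy, cx, cy):
    """Unit direction of the ray through pixel (u, v), camera at the origin.

    Assumption: ideal pinhole model, no distortion.
    """
    x, y = (u - cx) / fx, (v - cy) / fy
    norm = math.sqrt(x * x + y * y + 1.0)
    return (x / norm, y / norm, 1.0 / norm)

def point_to_ray_distance(point, ray_dir):
    """Perpendicular distance from a 3D point to a ray through the origin."""
    t = sum(p * d for p, d in zip(point, ray_dir))   # projection onto the ray
    closest = [t * d for d in ray_dir]               # nearest point on the ray
    return math.sqrt(sum((p - c) ** 2 for p, c in zip(point, closest)))

def rms_fpe(detections_px, points_cam_mm, fx, fy, cx, cy):
    """RMS point-to-ray distance, in the same units as the 3D points (mm)."""
    sum_sq = 0.0
    for (u, v), point in zip(detections_px, points_cam_mm):
        ray = pixel_to_ray(u, v, fx, fy, cx, cy)
        sum_sq += point_to_ray_distance(point, ray) ** 2
    return math.sqrt(sum_sq / len(points_cam_mm))
```

Because the 3D points are given in millimeters, the result is in millimeters too, independent of pixel size or sensor resolution.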

This changes the conversation completely.

For example, if a robot must grab objects with an accuracy of ±0.5 mm at a distance of 1 meter, then the camera system should be evaluated based on whether its RMS FPE stays within 0.5 mm at that same 1-meter range.

Now calibration becomes directly connected to the task.

Instead of asking, “Which camera has lower pixel error?” we can ask, “Which camera gives me the physical precision my application actually needs?”

That creates a practical framework for comparing cameras:

Calibrate different models.

Measure their RMS FPE at the required working distance.

Select the one that meets the performance target at the best cost.
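The three steps above reduce to a filter-and-minimize rule, sketched here. The camera names, FPE values, and costs are placeholders invented for the example, not measurements, and `select_camera` is a hypothetical helper.

```python
def select_camera(candidates, max_rms_fpe_mm):
    """Return the cheapest candidate whose RMS FPE meets the target, or None."""
    qualifying = [c for c in candidates if c["rms_fpe_mm"] <= max_rms_fpe_mm]
    if not qualifying:
        return None
    return min(qualifying, key=lambda c: c["cost"])

# Placeholder values for illustration only, all measured at the same working distance.
candidates = [
    {"name": "camera_a", "rms_fpe_mm": 0.80, "cost": 200},
    {"name": "camera_b", "rms_fpe_mm": 0.40, "cost": 450},
    {"name": "camera_c", "rms_fpe_mm": 0.05, "cost": 1500},
]

choice = select_camera(candidates, max_rms_fpe_mm=0.5)
```

With a 0.5 mm target, `camera_a` is rejected for missing the accuracy requirement and `camera_b` is chosen over `camera_c` on cost. The comparison is only meaningful if every candidate's FPE was measured at the same working distance.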

This is where calibration stops being purely mathematical and becomes an engineering tool.

A recent experiment Alex conducted illustrates this clearly.

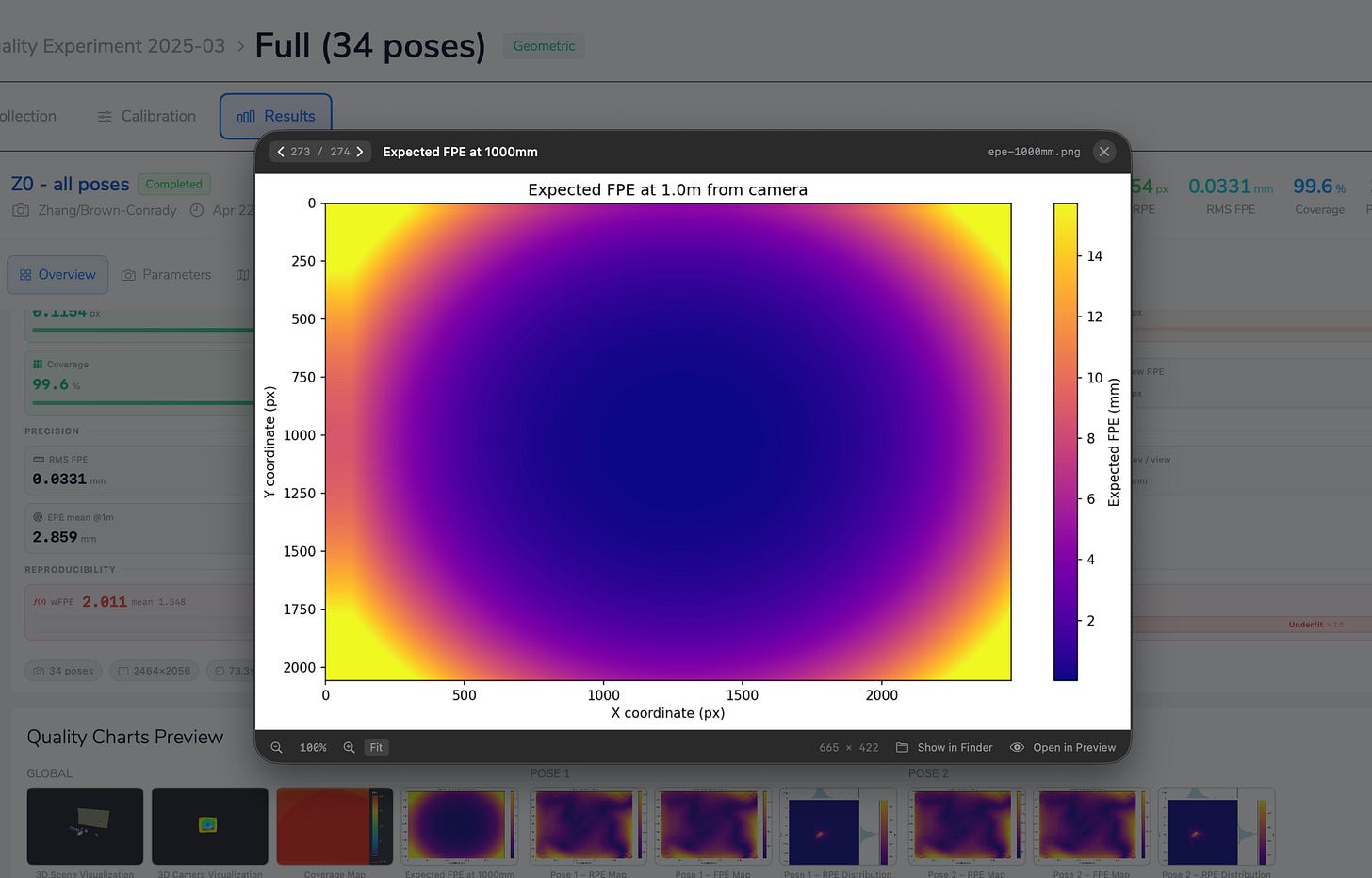

We calibrated a 5MP camera using 34 poses in a highly controlled optical environment.

Using an OpenCV model with 4 + 6 intrinsic parameters, the results were:

RMS RPE: 0.231 px

RMS FPE at 1 meter: 0.033 mm

The RPE confirms that the model fits image-space data very well.

But the FPE tells the more important story: at a 1-meter distance, this camera can achieve approximately 33 microns of physical precision.
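For intuition, the 0.033 mm figure can be restated as an angle, which also gives a rough way to think about other distances. The linear scaling in `fpe_at_distance_mm` is an assumption (a constant angular error); only the 1-meter value was actually measured, and both function names are illustrative.

```python
import math

def fpe_to_angle_urad(fpe_mm, distance_mm):
    """Angular error, in microradians, equivalent to an FPE at a given distance."""
    return math.atan2(fpe_mm, distance_mm) * 1e6

def fpe_at_distance_mm(fpe_mm, ref_distance_mm, new_distance_mm):
    """Extrapolate FPE to another distance.

    Assumption: the angular error stays constant, so FPE grows linearly
    with distance. This is an approximation, not a measured result.
    """
    return fpe_mm * new_distance_mm / ref_distance_mm

angle_urad = fpe_to_angle_urad(0.033, 1000.0)           # about 33 microradians
angle_arcsec = math.degrees(angle_urad * 1e-6) * 3600   # roughly 6.8 arcseconds
```

Under the constant-angle assumption, the same camera would show roughly 0.066 mm at 2 meters, which is why an FPE value should always be quoted together with its distance.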

For robotics, metrology, and industrial automation, that number is far more meaningful than pixel error alone.

RPE tells us how well the calibration fits the camera sensor.

FPE tells us how well the calibrated camera performs in the real world.